TL;DR

- Only 34% of organizations are truly redesigning workflows around AI — the other 66% are running demos.

- Real ROI comes from agentic AI, not chatbots: systems that decide and act, not just respond.

- 95% of GenAI pilots stalled before production. The blocker is almost always data, not the model.

- This guide covers the highest-value use cases by industry, with sourced numbers and a 4-stage framework to move from pilot to production.

What Does Generative AI Actually Mean in an Enterprise Context?

Generative AI refers to machine learning models that produce new content — text, code, images, or structured data — based on patterns learned from existing information. Unlike traditional AI that detects or classifies, generative AI creates, drafts, synthesizes, and in its most advanced form, executes.

I tell business leaders to stop anchoring on “chat interfaces” and start anchoring on autonomous workflows. The most valuable enterprise AI deployments in 2026 are agentic systems: AI that reasons across data sources, makes decisions, and takes action inside complex processes — with a human auditing the result, not initiating every step. That is a fundamentally different operating model from a chatbot.

McKinsey’s analysis estimates that generative AI could add $2.6 trillion to $4.4 trillion annually to the global economy across 63 use cases, which would increase the estimated impact of all AI technologies by about 15 to 40%. What has changed in 2026 is that this figure is moving from projection to measurement in organizations that have crossed from experimentation into production. The companies generating real returns are not the ones exploring AI. They are the ones restructuring specific workflows around it.

Quick Answer

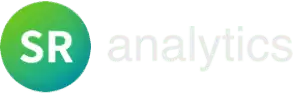

Generative AI use cases are specific business applications where AI models produce new content, automate multi-step workflows, or synthesize decisions — distinct from traditional AI, which only classifies or predicts existing data. Enterprise use cases span healthcare documentation, financial fraud detection, manufacturing design, marketing personalization, and software development.

Healthcare: How Is Generative AI Changing Clinical Operations?

Generative AI in healthcare has moved from departmental pilot to foundational infrastructure. The most mature health systems now carry AI as an operational budget line, not an R&D experiment.

The use case with the fastest clinician adoption is ambient clinical documentation. AI listens to patient-physician conversations and converts them into EHR-ready notes in real time. KLAS Research’s 2025 Ambient Speech Outcomes report highlights how ambient AI tools help providers reduce charting time, enabling more face-to-face patient interaction and less after-hours documentation, often called ‘pajama time’ by clinicians. Reducing pajama time is not just a productivity gain. It is a burnout measure, and it directly affects retention and care quality.

Beyond documentation, the high-value applications are in diagnostics and drug discovery. AI-assisted imaging is outperforming solo-clinician reads in peer-reviewed radiology trials. Generative drug discovery models are compressing compound discovery timelines from roughly ten years to eighteen months by predicting efficacy and toxicity before a single lab test runs.

The organizations advancing fastest have resolved one blocker that stalled most early pilots: data residency. Protected Health Information cannot leave compliant environments. Sovereign AI infrastructure — compute that stays within regulatory boundaries — has made production deployment viable where cloud-based approaches failed. Mayo Clinic’s capital commitment to AI as recurring infrastructure, rather than a series of one-off projects, reflects exactly this maturity shift.

Financial Services: What Are Banks and Firms Actually Deploying at Scale?

Financial services moved into serious AI deployment faster than almost any other sector. The data is structured, regulatory expectations are high, and a single successful application can close the ROI case immediately. One prevented breach, one optimized trade, one avoided compliance failure — the math closes quickly.

The most urgent deployment in 2026 is fraud detection. A 900% surge in deepfake-driven fraud since 2023 has made legacy signature-based systems inadequate. According to Palo Alto Networks’ State of Cloud Security Report 2025, 99% of organizations experienced at least one attack on AI apps and services in the past year, with rapid AI adoption expanding cloud attack surfaces as security teams struggle with insecure code volumes.

The other enterprise use cases for generative AI in finance, generating documented returns:

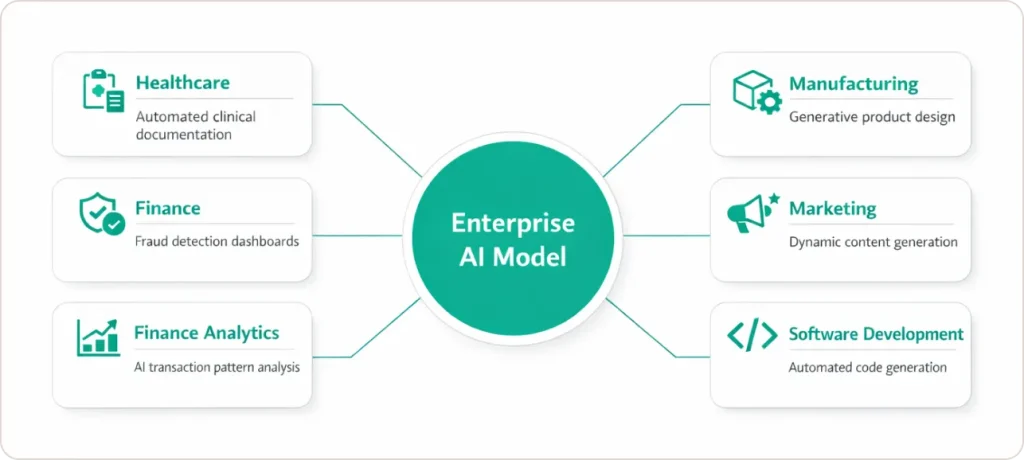

Automated Financial Reporting. Generative AI drafts earnings summaries, risk reports, and regulatory filings from structured data. Analysts shift from document production to judgment and exception review. Deloitte’s 2026 State of AI in the Enterprise report projects that over 50% of standard financial reports will be AI-generated within two years, a structural change to how financial communications teams are sized.

Portfolio Optimization. Agentic AI continuously monitors market signals, client risk parameters, and macroeconomic indicators, rebalancing portfolios without waiting for a human to initiate the action. Human oversight remains at the governance layer, not the execution layer.

Code Modernization. Goldman Sachs, JPMorgan, and comparable institutions are using AI to translate legacy COBOL systems into modern languages — a task that previously required rare specialist knowledge and multi-year timelines. This is infrastructure renewal at scale, not a pilot.

Manufacturing: How Is Generative AI Operating on the Physical Shop Floor?

Manufacturing is where generative AI becomes Physical AI — integrated with robotics, sensors, and CAD tools operating in the production environment itself, not just running analytics on a screen.

The clearest example is generative design. Engineers enter performance goals, material constraints, and cost parameters. The AI generates hundreds of part configurations satisfying those requirements. General Motors has applied this to vehicle component design, producing parts that are lighter and structurally stronger than those from traditional CAD workflows. The outcomes are not incrementally better but categorically different.

Predictive Maintenance with Root Cause Narratives. Traditional anomaly detection tells you something is wrong. Generative AI tells you why — producing plain-language explanations of failure root causes from sensor data. Organizations running this in production report 30–35% reductions in unplanned equipment downtime. Maintenance teams act on an explanation, not an alert.

Synthetic Sensor Data for Rare Failure Modes. This is the most underappreciated use case in manufacturing AI, and no competitor covers it directly. Catastrophic failures do not happen frequently enough to build a real training dataset from actual incidents.

Generative models create synthetic sensor patterns for rare failure events, improving model sensitivity without waiting for the real thing. This is why some predictive maintenance models outperform others significantly — not model architecture, but training data completeness.
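As a minimal sketch of the idea, assuming a single vibration-style signal with a stable healthy baseline, the snippet below builds a labeled training set in which the rare failure class is filled with synthetic windows. The function names, the drift-shaped failure pattern, and every parameter are illustrative assumptions, and a simple rule-based injector stands in here for a trained generative model.

```python
import random

def healthy_signal(n, mean=1.0, noise=0.05, seed=0):
    """Simulate n readings from a healthy sensor (hypothetical baseline)."""
    rng = random.Random(seed)
    return [rng.gauss(mean, noise) for _ in range(n)]

def inject_failure(signal, start, drift_per_step=0.02, seed=1):
    """Return a copy of signal with a synthetic failure beginning at index start.

    The failure is modeled as a steady upward drift plus extra noise.
    This shape is an assumption, not a measured failure signature.
    """
    rng = random.Random(seed)
    out = list(signal)
    for i in range(start, len(out)):
        out[i] += drift_per_step * (i - start + 1) + rng.gauss(0, 0.02)
    return out

def make_training_set(n_normal, n_failure, length=100):
    """Build labeled windows: 0 = healthy, 1 = synthetic failure."""
    data = []
    for k in range(n_normal):
        data.append((healthy_signal(length, seed=k), 0))
    for k in range(n_failure):
        base = healthy_signal(length, seed=1000 + k)
        data.append((inject_failure(base, start=length // 2, seed=k), 1))
    return data

# A balanced set, even though real failures would be far rarer than this.
dataset = make_training_set(n_normal=20, n_failure=20)
```

In practice the synthetic windows would be validated against the few real incidents available before being used to train a detector.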

Digital Twins. Digital twins simulate production line configurations before physical changes are made. Organizations at scale report up to 70% reductions in assembly process failure rates. DP World uses AI-driven routing to reduce port asset downtime in real time, an example of supply chain optimization that responds to conditions, not schedules.

Retail: How Are Retailers Using Generative AI Beyond Recommendations?

The basic recommendation engine is table stakes. The 2026 retail frontier is conversational and visual commerce — AI acting as a personal stylist or interior designer, not a filtered search bar.

Walmart’s integration is the most complete example: a shopping assistant, generative search, and a virtual room design feature that lets customers visualize products in their space before purchase. Amazon and Snapchat have enabled visual search at scale — photograph something in the real world, receive product matches via vector search in seconds. The friction from intent to purchase drops when customers do not need to translate a visual idea into keyword strings.
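The vector search step mentioned above can be sketched in a few lines. This is a toy illustration, not how any named retailer implements it: the three-dimensional embeddings, the catalog entries, and the function names are all assumptions, and a production system would use embeddings from an image model and an approximate nearest-neighbor index rather than a full scan.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_matches(query_vec, catalog, k=2):
    """Return the k catalog item names most similar to the query vector."""
    scored = [(cosine_similarity(query_vec, vec), name)
              for name, vec in catalog.items()]
    scored.sort(reverse=True)
    return [name for _, name in scored[:k]]

# Hypothetical product embeddings; real ones have hundreds of dimensions.
catalog = {
    "red sofa": [0.9, 0.1, 0.0],
    "red armchair": [0.8, 0.3, 0.1],
    "blue lamp": [0.0, 0.2, 0.9],
}

# Hypothetical embedding of the customer's photo.
photo_embedding = [0.85, 0.15, 0.05]
matches = top_matches(photo_embedding, catalog)
```

The customer never types a keyword: the photo is embedded once, and ranking is purely geometric.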

On the operations side, dynamic inventory management runs autonomously at leading retailers. Predictive engines ingest demand signals continuously and trigger restocking without human initiation. Virtual try-on is reducing return rates in apparel and furniture for retailers where returns represent 15–30% of gross merchandise volume. This is a cost structure improvement, not a UX feature.
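To make the autonomous restocking logic concrete, here is a hedged sketch of a reorder-point trigger. The moving-average forecast, the parameter names, and the sample numbers are assumptions chosen for illustration; a real predictive engine would replace the forecast function with a learned demand model.

```python
def forecast_daily_demand(recent_sales, window=7):
    """Naive forecast: mean of the last `window` days of unit sales."""
    recent = recent_sales[-window:]
    return sum(recent) / len(recent)

def reorder_quantity(on_hand, recent_sales, lead_time_days,
                     safety_stock, target_days):
    """Return units to order, or 0 if stock is above the reorder point."""
    daily = forecast_daily_demand(recent_sales)
    # Stock needed to cover demand while a new shipment is in transit.
    reorder_point = daily * lead_time_days + safety_stock
    if on_hand > reorder_point:
        return 0
    # Order enough to cover `target_days` of forecast demand.
    target_level = daily * target_days + safety_stock
    return max(0, round(target_level - on_hand))

sales = [12, 15, 11, 14, 13, 16, 17]  # hypothetical last 7 days of unit sales
order = reorder_quantity(on_hand=60, recent_sales=sales,
                         lead_time_days=5, safety_stock=10, target_days=14)
```

With these sample numbers the forecast is 14 units per day, the reorder point is 80 units, and the 60 units on hand trigger an order without anyone initiating it.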

Software Development: What Are the Real GenAI Use Cases for Engineering Teams?

AI tools now generate 82% of initial code in leading engineering organizations. The developer’s role has shifted toward architecture decisions, security review, and defining what the AI should build — not writing syntax. Small teams are now shipping enterprise-grade products at speeds that large teams could not match two years ago.

Agentic DevOps. Autonomous agents monitor infrastructure, detect anomalies, and initiate remediation for routine incidents without human intervention. Engineers handle novel failures and architectural decisions. Routine operations run on autopilot with human oversight at the exception layer.

Code Modernization. AI-assisted translation is making legacy system modernization economically viable for systems that previously required multi-year specialist engagements. Goldman Sachs and JPMorgan are running this in production today.

Low-Code Development for Business Teams. Platforms combining generative AI with natural language interfaces allow non-technical teams to build functional internal tools. The governance risk is real — shadow IT and insufficient security review are the two most common failure modes. Organizations building governance frameworks before broad deployment avoid the cleanup costs that consistently follow unmanaged rollouts.

“The challenge is no longer just building AI, it’s replatforming fragmented security into cohesive, best-of-breed platforms that accelerate outcomes,” says Anand Oswal, EVP, Palo Alto Networks (RSA Conference 2025).

What Are the Most Valuable Generative AI Marketing Use Cases?

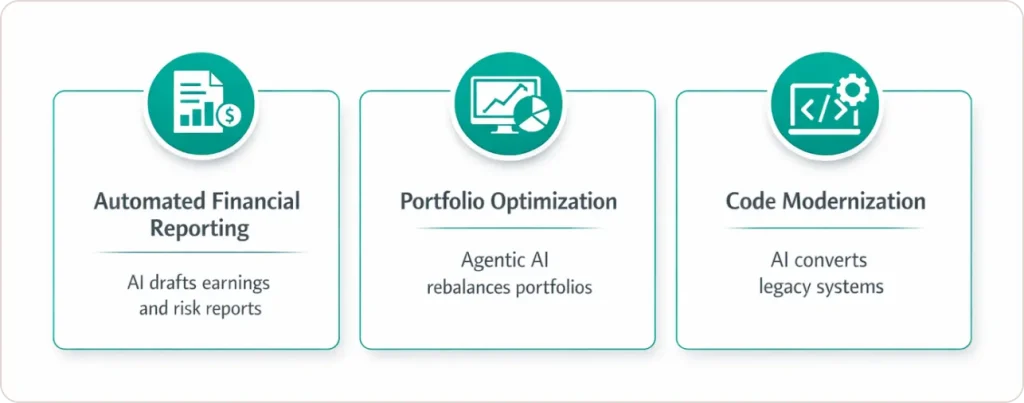

Generative AI marketing use cases cover content production at scale, hyper-personalized campaign delivery, and real-time brand intelligence. The highest-return applications reduce time-to-market while simultaneously improving targeting precision.

According to a Forrester Total Economic Impact study on AI-assisted content platforms, organizations using generative AI have substantially reduced content production times and accelerated campaign launches. That is a speed-to-market story, not a quality story, and in competitive categories, speed is a direct revenue driver.

Dynamic Content Personalization generates email, ad, and landing page variants per audience segment in real time, eliminating manual A/B test cycles and delivering higher CTR than static campaigns.

Creative Production at Scale generates campaign briefs, copy variations, and visual concepts for human refinement. The right lesson from Coca-Cola’s AI-powered creative work is not that AI replaced creative teams. It is that AI allowed those teams to produce and test at volumes that were previously economically impossible. The human judgment layer moved from execution to curation.

Brand Sentiment Monitoring synthesizes signals from digital channels into actionable summary reports. Teams respond to brand risk faster when they are acting on synthesis rather than monitoring raw data feeds.

One note of operational honesty that most content on this topic skips: generative AI marketing use cases carry a hallucination risk that does not exist in the same form in manufacturing or healthcare. A predictive maintenance model failing produces a missed alert. A generative marketing model failing produces published content with false claims. The governance requirements are different here, not lower.

Why Are 95% of GenAI Pilots Failing to Reach Production?

The failure rate is not a technology problem. It is a data infrastructure problem compounded by poor use case selection.

As of mid-2025, 95% of enterprise GenAI pilots had failed to reach production. The primary causes, consistently across sectors, are poor data quality, the absence of business process context, and technical debt in legacy systems that could not support AI integration at the workflow level.

Recent Gartner research and industry summaries highlight that organizations with higher data governance and data readiness maturity achieve more sustainable, higher-value AI outcomes than peers that focus primarily on tools or model choices.

The second failure pattern is use case selection based on demonstration quality rather than business impact. A compelling demo is not a validated use case. The organizations generating production returns picked workflows where three things were simultaneously true: the business problem was high-frequency, the current cost was measurable, and the data was available or achievable within 90 days. If any one of those conditions was missing, the pilot stalled. Explore our AI use case prioritization framework for a structured approach to making this selection.

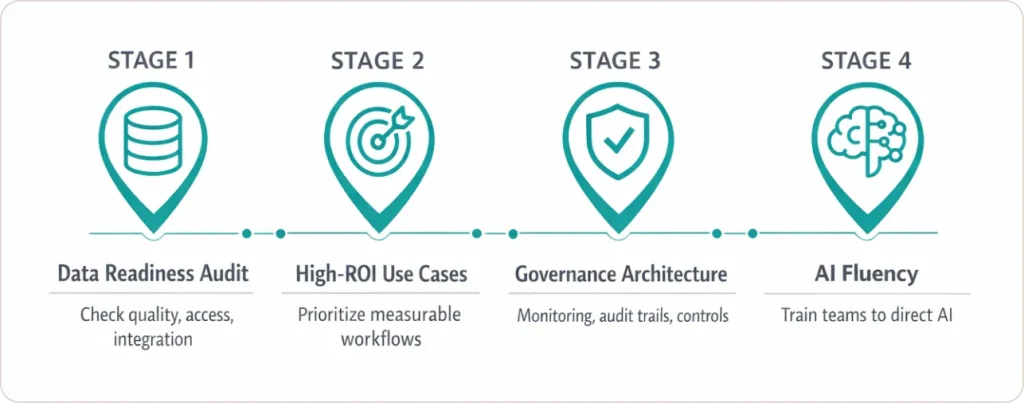

The SR Analytics 4-Stage AI Readiness Framework: From Pilot to Production

Most roadmaps give you a generic four-step list. This one is built around the specific failure modes that cause pilots to stall.

Stage 1: Data Readiness Audit. Assess your data infrastructure against three criteria before evaluating any model: quality (accurate and consistent?), access (reachable within compliance boundaries?), and integration (connectable to the workflows where AI will operate?). Skipping this is the single most common cause of pilot failure.

Stage 2: High-ROI Use Case Selection. Apply a three-factor filter: Is the problem high-frequency? Is the current cost measurable? Is the data available or achievable within 90 days? Prioritize 3 to 5 workflows that satisfy all three. If you cannot define the success metric before deployment begins, the use case is not ready.
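The three-factor filter can be expressed as a small screening function. This is a sketch under stated assumptions: the `UseCase` fields, the threshold of 100 runs per month for "high-frequency", and the sample candidates are hypothetical; only the 90-day data window and the cap of five workflows come from the framework itself.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class UseCase:
    name: str
    runs_per_month: int            # how often the workflow occurs
    monthly_cost: Optional[float]  # None if the current cost is not measured
    days_to_data: Optional[int]    # None if the data cannot be obtained

def passes_filter(uc, min_runs_per_month=100, max_days_to_data=90):
    """True only when all three factors hold at the same time."""
    high_frequency = uc.runs_per_month >= min_runs_per_month
    cost_measurable = uc.monthly_cost is not None
    data_ready = (uc.days_to_data is not None
                  and uc.days_to_data <= max_days_to_data)
    return high_frequency and cost_measurable and data_ready

def shortlist(candidates, limit=5):
    """Keep passing use cases, highest current cost first, capped at limit."""
    passing = [uc for uc in candidates if passes_filter(uc)]
    passing.sort(key=lambda uc: uc.monthly_cost, reverse=True)
    return [uc.name for uc in passing[:limit]]

candidates = [
    UseCase("invoice triage", 4000, 52000.0, 30),
    UseCase("board deck drafting", 4, 9000.0, 10),        # too infrequent
    UseCase("contract review", 600, None, 45),            # cost not measured
    UseCase("claims summarization", 2500, 80000.0, 200),  # data too far out
    UseCase("support ticket routing", 9000, 31000.0, 60),
]
selected = shortlist(candidates)
```

Ranking the survivors by current cost is one reasonable tiebreaker; a team could just as well rank by expected savings once that estimate exists.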

Stage 3: Governance Architecture Before Scaling. As agentic AI enters mission-critical processes, governance becomes a board-level continuity concern. This means real-time monitoring, complete audit trails, and the technical capacity to halt agent actions when behavior deviates from defined parameters. Building this after deployment costs significantly more and creates liability exposure that pre-deployment architecture avoids.
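A minimal sketch of the halt-and-audit capability described above might look like the following. The class name, the two guardrails (an action allowlist and an amount ceiling), and the log fields are assumptions for illustration; a production system would persist the audit trail to durable storage and route halts to a human reviewer.

```python
import time

class AgentHalted(Exception):
    """Raised when a guardrail has stopped the agent."""

class GuardedAgent:
    """Wraps agent actions with parameter checks and an audit trail."""

    def __init__(self, max_amount, allowed_actions):
        self.max_amount = max_amount
        self.allowed_actions = set(allowed_actions)
        self.halted = False
        self.audit_log = []  # in-memory here; assumed durable in production

    def _record(self, action, amount, outcome):
        self.audit_log.append({
            "ts": time.time(),
            "action": action,
            "amount": amount,
            "outcome": outcome,
        })

    def execute(self, action, amount):
        # Once halted, every request is refused until a human resets the agent.
        if self.halted:
            self._record(action, amount, "rejected_halted")
            raise AgentHalted("agent is halted pending human review")
        # Any deviation from the defined parameters halts the agent.
        if action not in self.allowed_actions or amount > self.max_amount:
            self.halted = True
            self._record(action, amount, "halted_out_of_bounds")
            raise AgentHalted(f"{action} for {amount} is outside defined parameters")
        self._record(action, amount, "executed")
        return True

agent = GuardedAgent(max_amount=10_000, allowed_actions=["rebalance"])
agent.execute("rebalance", 2_500)
try:
    agent.execute("rebalance", 50_000)  # exceeds the ceiling
except AgentHalted:
    pass
outcomes = [entry["outcome"] for entry in agent.audit_log]
```

Every attempt is logged, including refused ones, so the trail shows both what the agent did and what it was prevented from doing.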

Stage 4: AI Fluency Across the Organization. The skills gap is the most underestimated barrier in enterprise AI adoption. Technical teams need depth in model evaluation and integration architecture. Business teams need enough fluency to direct AI systems and audit outputs critically. Prompt engineering and human-AI collaboration are 2026 core competencies — not optional training. Organizations investing in fluency at both layers see substantially shorter time-to-value on every subsequent deployment.

Conclusion: What the Patterns Tell You

Across every industry covered in this post, one pattern holds. The organizations generating real returns from generative AI started with a specific problem and the data infrastructure to support it before selecting a model or a vendor.

The common denominator is not the technology. It is the decision made before the technology: what problem are we solving, do we have the data, and do we have the governance to run it at scale? That sequencing separates the 34% genuinely reimagining their operations from the 66% still running demos.

If you are in the evaluation phase, stop researching use cases and start auditing your data foundation. If you are stalled in pilot, the blocker is almost always data, not the model. And if you are ready to scale, the investment in governance and fluency is what makes every subsequent deployment faster.

Working with experienced generative AI consulting services is the fastest way to compress that learning curve and avoid the infrastructure mistakes that cause 95% of pilots to stall before production.

What Should You Do Next?

The organizations generating real returns from generative AI share one characteristic: they started with a specific problem and measurable data, not with a technology. The question is no longer whether to use generative AI. It is which workflows, with which data, toward which outcomes, and in what sequence.